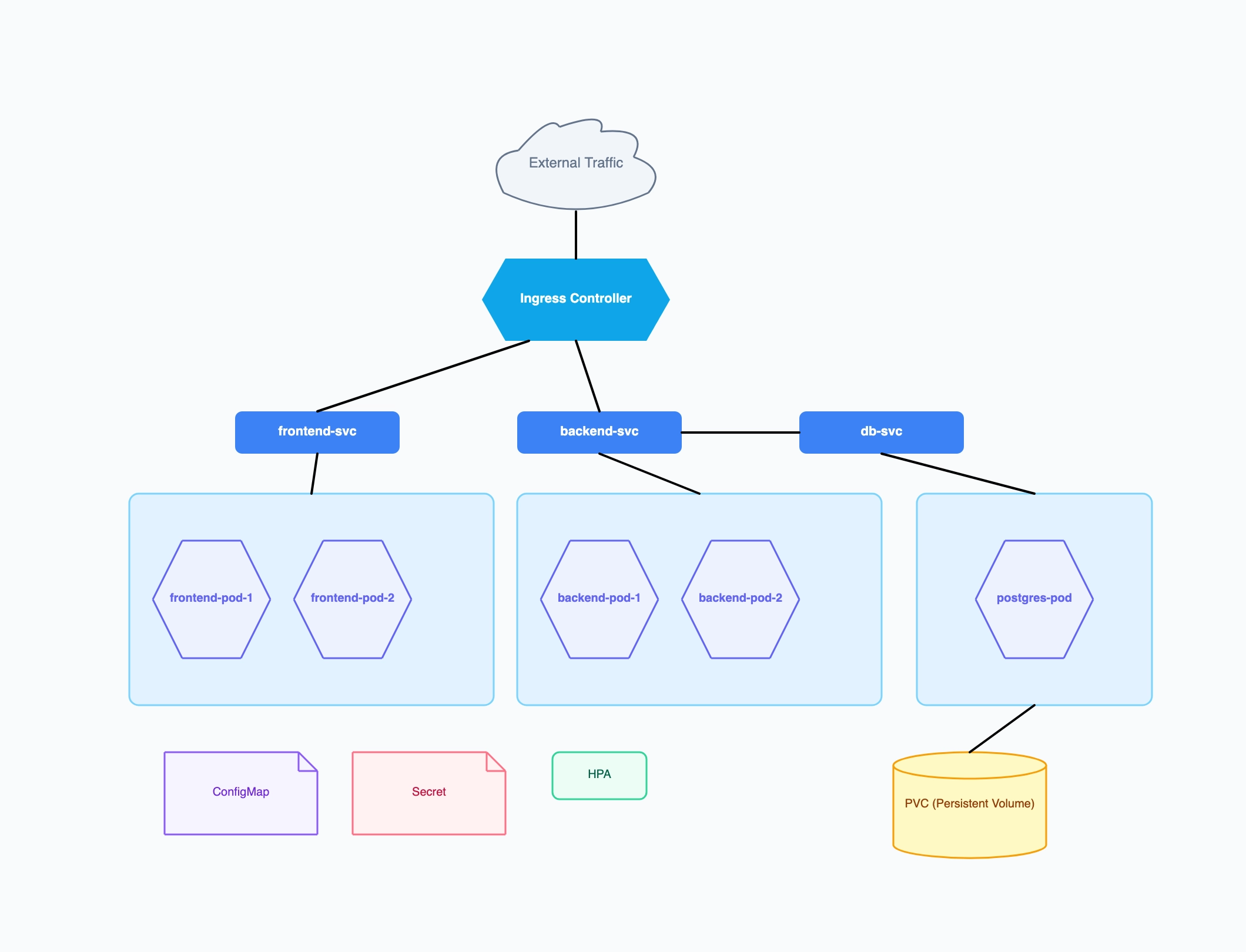

Three-tier web application

Who uses it: Backend engineer documenting a standard stateful web app deployment

Ingress: nginx ingress controller handling TLS termination

frontend-svc → 2 frontend pods (React app, Node.js SSR)

backend-svc → 2 backend pods (REST API, Python/Go)

db-svc → 1 postgres pod with PVC for durable storage

ConfigMap: environment variables; Secret: DB credentials

HPA on backend deployment, target CPU 70%
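
The 70% CPU target on the backend can be made concrete with the scaling rule the Horizontal Pod Autoscaler documents: desired replicas = ceil(current replicas × current metric / target metric). The sketch below is a simplified illustration, not the controller's code. It leaves out the HPA's tolerance band and stabilization window, and the `min_replicas` and `max_replicas` defaults are assumptions chosen for this example.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_cpu_pct: float,
    target_cpu_pct: float = 70.0,  # target from the diagram
    min_replicas: int = 2,         # assumed lower bound (hypothetical)
    max_replicas: int = 10,        # assumed upper bound (hypothetical)
) -> int:
    """Apply the documented HPA ratio rule, then clamp to the replica bounds."""
    raw = math.ceil(current_replicas * current_cpu_pct / target_cpu_pct)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Two backend pods averaging 105% of their CPU request.
    print(desired_replicas(2, 105.0))
```

Annotating the diagram with the target and the replica bounds lets a reader estimate how far the backend tier can grow under load.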

Why this works: Placing each tier on its own dedicated worker node makes the blast radius of a node failure immediately visible. If Node 3 goes down, only the database pod is lost; the stateless frontend and backend pods keep running, although requests that need the database will fail until it recovers.
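
The blast-radius reasoning can be sketched as a lookup from pod to node. The pod and node names below are hypothetical and assume the one-tier-per-node layout the diagram describes. In a real cluster the placement would come from the scheduler, for example via `kubectl get pods -o wide`.

```python
# Hypothetical placement: one tier per worker node, as in the diagram.
PLACEMENT = {
    "frontend-1": "node-1",
    "frontend-2": "node-1",
    "backend-1": "node-2",
    "backend-2": "node-2",
    "postgres-0": "node-3",
}


def blast_radius(placement: dict[str, str], failed_node: str) -> list[str]:
    """Return the pods that are lost when failed_node goes down, sorted by name."""
    return sorted(pod for pod, node in placement.items() if node == failed_node)


if __name__ == "__main__":
    for node in ("node-1", "node-2", "node-3"):
        print(node, "->", blast_radius(PLACEMENT, node))
```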

Microservices platform with service mesh

Who uses it: Platform engineer running 10+ services behind Istio

Ingress Gateway (Istio) as the single entry point

Namespace: auth → auth-service pod + redis-cache pod

Namespace: catalog → catalog-service + search-service pods

Namespace: orders → order-service + payment-service pods

Istio sidecar proxies injected into every pod

Prometheus + Grafana in monitoring namespace

Why this works: Grouping services by namespace in the diagram mirrors the scope at which Kubernetes RBAC roles and network policies are usually applied, so readers can immediately see which teams own which services and where network traffic is allowed to flow.
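
As a rough illustration of how a namespace boundary in the diagram can become an enforced one, the sketch below builds a `NetworkPolicy` manifest as a Python dictionary. The policy name and the choice of which namespaces may reach `orders` are assumptions made for this example. The `kubernetes.io/metadata.name` label is the one Kubernetes sets automatically on every namespace.

```python
import json


def allow_from_namespaces(namespace: str, allowed: list[str]) -> dict:
    """Build a NetworkPolicy that admits ingress only from the listed namespaces."""
    peers = [
        {"namespaceSelector": {"matchLabels": {"kubernetes.io/metadata.name": ns}}}
        for ns in allowed
    ]
    # An empty "from" list would match every source, so when nothing is
    # allowed we emit no ingress rules at all, which denies all ingress.
    ingress = [{"from": peers}] if peers else []
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-selected-namespaces", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector: applies to every pod in the namespace
            "policyTypes": ["Ingress"],
            "ingress": ingress,
        },
    }


if __name__ == "__main__":
    # Hypothetical rule: only the catalog namespace may call into orders.
    print(json.dumps(allow_from_namespaces("orders", ["catalog"]), indent=2))
```

The printed JSON can be applied with `kubectl apply -f -`, since kubectl accepts JSON as well as YAML. A policy only takes effect if the cluster's network plugin enforces NetworkPolicy.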

Data processing cluster (Spark on K8s)

Who uses it: Data engineer running batch jobs and streaming pipelines on Kubernetes

Spark driver pod → multiple Spark executor pods (dynamic allocation)

Kafka consumer pods in streaming namespace

MinIO pods for object storage (S3-compatible)

Spark History Server for job monitoring

Persistent Volume Claims for shuffle storage

CronJob resource triggering nightly batch runs

Why this works: For data workloads, showing the dynamic nature of executor pods (they scale up per job and terminate afterward) alongside the persistent infrastructure (Kafka, MinIO) helps the team understand which costs are fixed and which are variable.
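
The fixed-versus-variable split can be sketched with a small cost model. Every number here is invented for illustration: the hourly prices, pod counts, and job duration are assumptions, not measurements from any real cluster.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    hourly_cost: float    # hypothetical price per pod-hour
    pods: int
    hours_per_day: float  # 24 for always-on pods, job duration for executors


def daily_cost(workloads: list[Workload]) -> float:
    """Sum pod-hours times price across a group of workloads."""
    return sum(w.hourly_cost * w.pods * w.hours_per_day for w in workloads)


# Persistent infrastructure runs around the clock (fixed cost).
FIXED = [
    Workload("kafka-consumer", 0.10, 3, 24),
    Workload("minio", 0.20, 4, 24),
    Workload("spark-history-server", 0.05, 1, 24),
]

# Executors exist only while the nightly batch job runs (variable cost).
VARIABLE = [Workload("spark-executor", 0.50, 20, 2)]

if __name__ == "__main__":
    print(f"fixed per day:    {daily_cost(FIXED):.2f}")
    print(f"variable per day: {daily_cost(VARIABLE):.2f}")
```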

Student lab cluster for learning Kubernetes

Who uses it: Computer science student or bootcamp grad setting up a local or cloud lab

Single worker node (minikube or kind)

nginx-pod exposed via NodePort service

mysql-pod with hostPath volume (lab-grade storage)

Deployment and ReplicaSet resources labeled

kubectl port-forward for local access

Why this works: Even a minimal one-node lab cluster benefits from a diagram. It forces the learner to think about the relationship between Deployment, ReplicaSet, and Pod before touching kubectl, which heads off a common confusion: a deleted pod comes back because the ReplicaSet creates a replacement to restore the desired replica count.
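
The "deleted pod comes back" behavior follows from how a controller reconciles desired state against observed state. The toy loop below is a simplified model, not the actual ReplicaSet controller, and the pod naming scheme is made up for this example.

```python
def reconcile(desired: int, running: list[str], next_id: int) -> tuple[list[str], int]:
    """One pass of a ReplicaSet-style loop: add or remove pods to match desired."""
    pods = list(running)
    while len(pods) < desired:
        pods.append(f"nginx-{next_id}")  # hypothetical naming scheme
        next_id += 1
    while len(pods) > desired:
        pods.pop()
    return pods, next_id


if __name__ == "__main__":
    pods, next_id = reconcile(2, [], 0)
    print("initial:      ", pods)

    pods.remove("nginx-0")  # roughly what `kubectl delete pod` does
    print("after delete: ", pods)

    pods, next_id = reconcile(2, pods, next_id)
    print("after reconcile:", pods)
```

To stop the pod for good, the learner changes the desired state instead, for example by scaling the Deployment to zero or deleting it.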

Multi-tenant SaaS cluster

Who uses it: SaaS company running one cluster for multiple enterprise customers

Namespace per tenant with LimitRange and ResourceQuota

Shared ingress controller with host-based routing

Shared monitoring stack in ops namespace

Tenant-isolated databases with separate PVCs

Cluster-level RBAC (ClusterRole, ClusterRoleBinding) vs namespace-level RBAC (Role, RoleBinding)

Why this works: Multi-tenant diagrams help compliance and security reviewers verify that tenant isolation is enforced at the namespace level rather than relying only on application-layer access controls.
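
A reviewer's check of this kind can be sketched as a gap report over an inventory of objects per tenant namespace. The inventory below is hypothetical. In practice it might be assembled from `kubectl get` output, and the list of required kinds would follow the organization's own policy.

```python
# Kinds that the diagram says every tenant namespace should contain.
REQUIRED_KINDS = {"ResourceQuota", "LimitRange"}


def missing_isolation_objects(inventory: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each tenant namespace to the required kinds it lacks."""
    gaps = {}
    for namespace, kinds in inventory.items():
        missing = REQUIRED_KINDS - set(kinds)
        if missing:
            gaps[namespace] = sorted(missing)
    return gaps


if __name__ == "__main__":
    # Hypothetical inventory: tenant-b is missing its LimitRange.
    inventory = {
        "tenant-a": ["ResourceQuota", "LimitRange", "PersistentVolumeClaim"],
        "tenant-b": ["ResourceQuota", "PersistentVolumeClaim"],
    }
    print(missing_isolation_objects(inventory))
```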