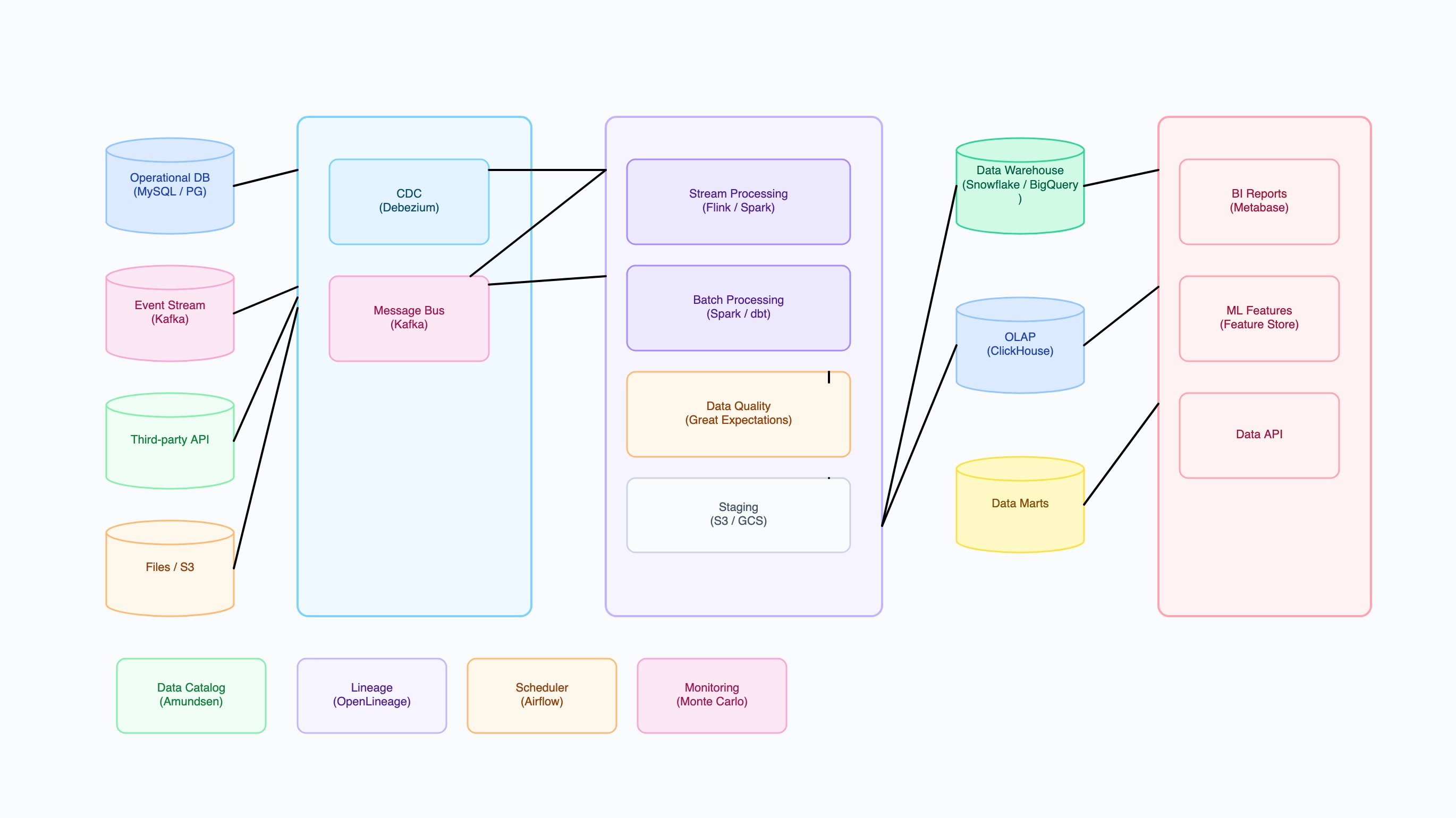

E-commerce analytics platform

Who uses it: Data engineer at a mid-size e-commerce company handling 1M+ orders/day

Why this works: Separating the warehouse into raw, staging, and analytics schema layers means a broken transformation job affects only the consumers downstream of the layer it writes to. The raw data remains intact and can be re-processed without re-ingestion.
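
The isolation described above can be sketched in a few lines. This is a minimal illustration, not the platform's actual implementation: it uses Python's built-in `sqlite3` with one attached in-memory database per layer to stand in for warehouse schemas. The schema names (`raw_layer`, `staging_layer`, `analytics_layer`), table names, columns, and sample rows are all hypothetical.

```python
import sqlite3

# Hypothetical layer names standing in for warehouse schemas.
LAYERS = ("raw_layer", "staging_layer", "analytics_layer")

# A correct staging transformation: cast raw text into typed columns.
GOOD_STAGING_SQL = """
CREATE TABLE staging_layer.orders AS
SELECT order_id, CAST(amount_cents AS INTEGER) AS amount_cents, ordered_on
FROM raw_layer.orders
"""

# A broken transformation: references a column that does not exist in raw.
BROKEN_STAGING_SQL = """
CREATE TABLE staging_layer.orders AS
SELECT order_id, CAST(amount_usd AS INTEGER) AS amount_cents, ordered_on
FROM raw_layer.orders
"""


def build_warehouse():
    """Create an in-memory warehouse and ingest sample rows into the raw layer."""
    conn = sqlite3.connect(":memory:")
    for layer in LAYERS:
        conn.execute(f"ATTACH DATABASE ':memory:' AS {layer}")
    # Raw layer: data kept exactly as ingested (everything as text).
    conn.execute(
        "CREATE TABLE raw_layer.orders "
        "(order_id TEXT, amount_cents TEXT, ordered_on TEXT)"
    )
    conn.executemany(
        "INSERT INTO raw_layer.orders VALUES (?, ?, ?)",
        [
            ("o1", "1999", "2024-01-01"),
            ("o2", "500", "2024-01-01"),
            ("o3", "1250", "2024-01-02"),
        ],
    )
    conn.commit()
    return conn


def rebuild_staging(conn, transform_sql):
    """Rebuild staging from raw. Reads raw_layer but never writes to it."""
    conn.execute("DROP TABLE IF EXISTS staging_layer.orders")
    conn.execute(transform_sql)
    conn.commit()


def rebuild_analytics(conn):
    """Rebuild the analytics table from staging only."""
    conn.execute("DROP TABLE IF EXISTS analytics_layer.daily_revenue")
    conn.execute(
        "CREATE TABLE analytics_layer.daily_revenue AS "
        "SELECT ordered_on, SUM(amount_cents) AS revenue_cents "
        "FROM staging_layer.orders GROUP BY ordered_on"
    )
    conn.commit()


def raw_row_count(conn):
    """Count rows in the raw layer."""
    return conn.execute("SELECT COUNT(*) FROM raw_layer.orders").fetchall()[0][0]


if __name__ == "__main__":
    conn = build_warehouse()
    try:
        rebuild_staging(conn, BROKEN_STAGING_SQL)
    except sqlite3.OperationalError as exc:
        print("staging job failed:", exc)
    print("raw rows still present:", raw_row_count(conn))
    # Fix the job and re-process from raw; no re-ingestion is needed.
    rebuild_staging(conn, GOOD_STAGING_SQL)
    rebuild_analytics(conn)
```

In this sketch the failed job leaves staging (and anything built on it) unavailable until the fix is deployed, but the raw table is never written to by a transformation, so re-running the corrected job is enough to recover.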