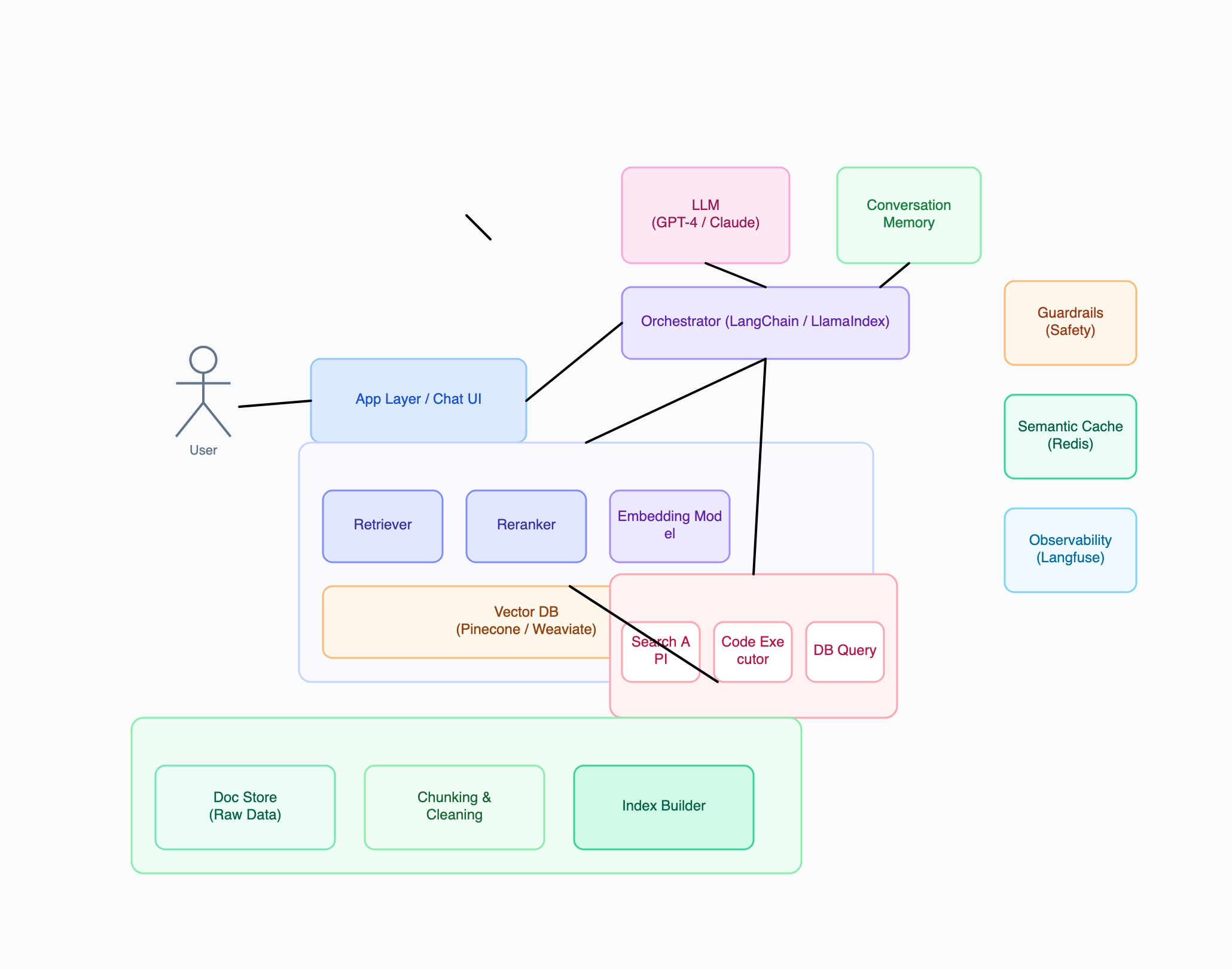

Enterprise document Q&A system (RAG)

Who uses it: An ML engineer building an internal knowledge base chatbot

Why this works: Enterprise RAG systems need the full stack. Guardrails catch sensitive data before it reaches the LLM, the reranker improves precision when the document corpus is large and noisy, and per-department cost tracking helps justify the infrastructure spend.
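
A minimal sketch of how those three pieces might fit together in front of the LLM call. Everything here is an assumption made for illustration: the names (`redact`, `rerank`, `CostTracker`, `build_prompt`), the two regex patterns, the word-overlap scoring that stands in for a real reranker model, the whitespace token count, and the placeholder price are not taken from any specific product.

```python
import re
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical patterns; a production guardrail would cover far more cases.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),         # SSN-like numbers
    re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),  # email addresses
]


def redact(text: str) -> str:
    """Guardrail step: mask sensitive spans before text reaches the LLM."""
    for pattern in SENSITIVE_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


def _words(text: str) -> set[str]:
    """Lowercased word set, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def rerank(query: str, docs: list[str], top_k: int = 2) -> list[str]:
    """Toy reranker: keep the top_k docs by word overlap with the query.

    A stand-in for a learned reranker (for example a cross-encoder).
    """
    query_words = _words(query)
    return sorted(
        docs,
        key=lambda doc: len(query_words & _words(doc)),
        reverse=True,
    )[:top_k]


@dataclass
class CostTracker:
    """Accumulates approximate token usage per department."""

    price_per_1k_tokens: float = 0.01  # assumed placeholder price
    tokens_by_department: dict = field(
        default_factory=lambda: defaultdict(int)
    )

    def record(self, department: str, prompt: str) -> None:
        # Whitespace word count is a rough proxy for real tokenization.
        self.tokens_by_department[department] += len(prompt.split())

    def cost(self, department: str) -> float:
        tokens = self.tokens_by_department[department]
        return tokens / 1000 * self.price_per_1k_tokens


def build_prompt(query: str, candidates: list[str],
                 department: str, tracker: CostTracker) -> str:
    """Rerank retrieved candidates, redact, and record usage."""
    context = rerank(query, candidates)
    prompt = redact("\n".join(context) + "\n\nQuestion: " + query)
    tracker.record(department, prompt)
    return prompt


if __name__ == "__main__":
    tracker = CostTracker()
    retrieved = [
        "Vacation policy: employees accrue 20 days of vacation per year.",
        "Contact jane.doe@example.com about the vacation policy.",
        "The cafeteria opens at 8am.",
    ]
    print(build_prompt("What is the vacation policy?", retrieved, "hr", tracker))
    print(tracker.cost("hr"))
```

Redaction runs after reranking so that only the text actually sent to the model is scanned, and usage is recorded on the redacted prompt so the tracked volume matches what the LLM receives.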